#010: The Art of Statistics

A good way to brush up your statistics and take a stroll through the field

The Art of Statistics: Learning from Data

by: David Spiegelhalter

Introduction

To answer questions, one must start by clearly defining the problem and the concepts in question.

Data has two limitations:

It's an incomplete picture of the world: it can never represent the totality of scenarios.

It may change depending on variables and context. We call this variability.

Data literacy is the ability to do statistical analysis on real-world problems and to critique other people's analyses.

"Inter ocular data" hits you between the eyes (i.e. very clear and obvious)

Framing is how you position the results (negative or positive). This affects comprehension in the target audience.

"5% Mortality rate vs 95% survival"

The PPDAC cycle

PPDAC (Problem, Plan, Data, Analysis, Conclusion) is a cycle used to answer research questions.

Problem: specifying and understanding the question. E.g. how many trees are there in the world?

Plan - design the investigation itself: what to measure and how, and how the data will be recorded and collected

Data - Collection, management, cleaning

Analysis - sort data, tables+graphs, search for patterns, hypothesis generation

Conclusions - interpretation, conclusion, new ideas

Data Visualisation

3D pie charts are bad because they make the data hard to read and distort the areas of the slices. Bar charts are easier to understand.

Relative risk: the risk in one situation expressed relative to (as a ratio of) the risk in another situation.

Useful: expected frequencies:

Instead of just looking at percentages, it's sometimes useful to ask "What does this mean for 100 or 10,000 people?" (e.g. 532 out of 10,000). This helps people understand probabilities.
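A minimal Python sketch of relative risk versus expected frequencies. The risks (6 in 100 vs 7 in 100) are hypothetical numbers chosen for illustration, and the helper names `relative_risk` and `expected_frequency` are likewise hypothetical.

```python
# Hypothetical risks for illustration only: baseline group vs exposed group.
baseline_risk = 0.06   # 6 in 100 people
exposed_risk = 0.07    # 7 in 100 people

def relative_risk(exposed: float, baseline: float) -> float:
    """Risk in the exposed group as a ratio of the baseline risk."""
    return exposed / baseline

def expected_frequency(risk: float, population: int) -> int:
    """How many people out of `population` we expect to be affected."""
    return round(risk * population)

rr = relative_risk(exposed_risk, baseline_risk)
print(f"Relative risk: {rr:.2f} ({(rr - 1) * 100:.0f}% relative increase)")
print(f"Absolute difference: {(exposed_risk - baseline_risk) * 100:.0f} percentage point(s)")

# Expected frequencies make the same comparison concrete.
for n in (100, 10_000):
    before = expected_frequency(baseline_risk, n)
    after = expected_frequency(exposed_risk, n)
    print(f"Out of {n:,} people: {before} vs {after} affected ({after - before} extra)")
```

A relative increase of roughly 17% sounds alarming; "one extra person in every 100" conveys the same numbers in a way most readers find easier to judge.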

Wisdom of crowds: if you collect many people's estimates or guesses (or many inputs) and average them, the result can be surprisingly accurate. The book invites the reader to guess the number of beans in a pictured jar; the average of the responses was remarkably accurate.
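A small simulation of why averaging guesses can work. It assumes (our assumption, not the book's data) that each guess is the true count plus independent, unbiased noise; the jar size of 1,000 and the noise level are hypothetical.

```python
import random
import statistics

random.seed(42)
TRUE_COUNT = 1000  # hypothetical number of beans in the jar

# Assumption: each guess = true value + independent, unbiased noise.
guesses = [TRUE_COUNT + random.gauss(0, 300) for _ in range(500)]

crowd_estimate = statistics.mean(guesses)
typical_individual_error = statistics.median(abs(g - TRUE_COUNT) for g in guesses)

print(f"Crowd average: {crowd_estimate:.0f}")
print(f"Crowd error: {abs(crowd_estimate - TRUE_COUNT):.0f}")
print(f"Typical individual error: {typical_individual_error:.0f}")
```

Independent errors largely cancel out in the average. If everyone shares the same bias (say, all guesses are too low), averaging will not remove it.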

What does 'average' mean?

There are three common interpretations of 'average':

Mean - the sum of the values divided by the number of values

Median - the middle value, with half the values on one side and half on the other

Mode - the most common value
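The three averages can differ a lot on skewed data. This sketch uses Python's standard `statistics` module on a small hypothetical dataset with one large value.

```python
import statistics

# Hypothetical, right-skewed data (e.g. answers to a "how many?" survey question).
values = [0, 1, 1, 1, 2, 2, 3, 4, 5, 30]

print("mean:  ", statistics.mean(values))    # 4.9, pulled up by the outlier 30
print("median:", statistics.median(values))  # 2.0, the middle value
print("mode:  ", statistics.mode(values))    # 1, the most common value
```

With skewed data like this, the median is often a better description of a "typical" value than the mean.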

How to plot information?

Data visualization influences the interpretation of said data. To plot information well, one must make sure the visualization:

Contains reliable information

Is designed so that patterns become visible

Is attractive but clear and honest

Enables exploration

The first rule of communication is to shut up and listen. "Two ears and one mouth".

Numbers don't speak for themselves: you need a clear intention behind every study and must know what you want to achieve.

When you're analyzing a data set with a research question in mind, you're going from raw data -> Sample -> Population

In each stage, we may find limitations or problems in the data which can affect our conclusions.

Inductive inference

Inductive reasoning is the process of going from sampled data to conclusions about a study population or a wider general population.

A few interesting points.

First, on the value of sampling:

If you have cooked a large pan of soup, you do not need to eat it all to find out if it needs more seasoning. You can just taste a spoonful, provided you have given it a good stir.

George Gallup.

Second, if you want to generalize from sample to population, you need to make sure your sample is representative. Induction is prone to errors and biases, as study limitations can lead to conclusions that are not necessarily true.

Third, we have to be careful with ascertainment bias.

Ascertainment bias generally refers to situations in which the way data is collected is more likely to include some members of a population than others. This can happen when there is more intense surveillance or screening for the outcome of interest in certain populations. For example, one might find a higher rate of breast cancer in a richer population with easy access to mammography when compared to a poorer population with limited healthcare access.[1]

This bias happens when whether a feature gets recorded depends on people's background. For example, wealthy people are more likely to be diagnosed with cancer because they get tested more.
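A simulation sketch of ascertainment bias. It assumes both groups have the same true prevalence (2%) and differ only in how likely a case is to be tested and diagnosed; all rates and group names are hypothetical.

```python
import random

random.seed(0)

TRUE_PREVALENCE = 0.02  # assumption: identical true rate in both groups
# Hypothetical probability that a person with the disease gets tested (and so diagnosed).
TESTING_RATE = {"wealthier": 0.9, "poorer": 0.4}
N = 100_000

def observed_rate(testing_rate: float) -> float:
    """Share of the group with a recorded diagnosis (only tested cases are found)."""
    diagnosed = 0
    for _ in range(N):
        has_disease = random.random() < TRUE_PREVALENCE
        is_tested = random.random() < testing_rate
        if has_disease and is_tested:
            diagnosed += 1
    return diagnosed / N

rates = {group: observed_rate(rate) for group, rate in TESTING_RATE.items()}
for group, rate in rates.items():
    print(f"{group}: observed {rate:.2%} vs true {TRUE_PREVALENCE:.2%}")
```

The wealthier group appears to have more than twice the disease rate, purely because more of its cases are found.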

Fourth, it's important to define what we mean by statistical causation. The statistical idea of causation is not deterministic. We tend to think of causation as:

X happens, therefore Y will happen.

In statistics, causation rather means:

When we force X to happen, Y tends to happen more often.

The bell curve

The bell curve, also called the normal or Gaussian distribution, was explored by Carl Friedrich Gauss in 1809. It's defined by its mean (or expectation) and its standard deviation (SD), a measure of the spread of the data. When the mean and SD are calculated from data they are called statistics, while when they describe populations they are called parameters.

More information:

A Z-score is the number of standard deviations away from the mean.

Percentiles (such as the 20th, 50th or 75th) are useful, as are quartiles (the 25th, 50th and 75th percentiles).

When we measure the areas in a probability distribution, we can say two things:

The proportion of the population/sample that lies in that range

The probability that an element chosen randomly will fall in that range.
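A sketch of z-scores and areas under a normal curve using `statistics.NormalDist` from Python's standard library (3.9+ for `quantiles`). The mean of 3,500 g and SD of 500 g are hypothetical birth-weight parameters chosen for illustration.

```python
from statistics import NormalDist

# Hypothetical population parameters: birth weight with mean 3,500 g and SD 500 g.
birth_weight = NormalDist(mu=3500, sigma=500)

def z_score(x: float, dist: NormalDist) -> float:
    """Number of standard deviations x lies from the mean."""
    return (x - dist.mean) / dist.stdev

# An area under the curve is both the proportion of the population in that range
# and the probability that a randomly chosen baby falls in that range.
share_low = birth_weight.cdf(2500)
share_middle = birth_weight.cdf(4500) - birth_weight.cdf(2500)

print(f"z-score of 2,500 g: {z_score(2500, birth_weight):.1f}")
print(f"Below 2,500 g: {share_low:.1%}")
print(f"Between 2,500 g and 4,500 g: {share_middle:.1%}")
print(f"Quartiles: {[round(q) for q in birth_weight.quantiles(n=4)]}")
```

Roughly 95% of a normal distribution lies within two SDs of the mean, which is why a z-score beyond ±2 is often treated as unusual.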

A note on medical trials

Any medical trial should have the following attributes:

Controls: a group to compare against or a control group taking a placebo

Allocation of treatment: randomized selection of participants to be treated or not

People counted properly in each group: participants are analysed in the group they were allocated to, even if they didn't actually take the medication. This is called "intention to treat".

Blind: people don't know which group they belong to

Groups treated equally

Those assessing the final outcomes should not know which groups the subjects are in.

Measure everyone: follow up with everyone to understand all side effects

Multiple trials: don't rely on a single study to draw conclusions

Review systematically

To properly study causation, randomization is encouraged.
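A minimal sketch of simple randomization: shuffle the participants and split them into two equal groups. The participants and their ages are hypothetical; the point is that random allocation tends to balance factors (here, age) that we did not deliberately control for.

```python
import random
import statistics

random.seed(1)

# Hypothetical participants, each with an age we might not even have measured.
participants = [{"id": i, "age": random.randint(20, 80)} for i in range(1000)]

def randomize(people, seed=None):
    """Simple randomization: shuffle a copy, then split into two equal groups."""
    rng = random.Random(seed)
    shuffled = people[:]
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

treatment, control = randomize(participants, seed=2)
print("mean age, treatment:", round(statistics.mean(p["age"] for p in treatment), 1))
print("mean age, control:  ", round(statistics.mean(p["age"] for p in control), 1))
```

Real trials often use refinements such as block or stratified randomization; this shows only the basic idea.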

Establishing causation in observational studies

[This is one of the most useful insights of this book as it can and should be applied across disciplines and areas of work. When we try to answer questions and establish causation in our day to day jobs based on observing (rather than affecting) data, we can apply these principles to establish causation]

Studies without experimentation are said to be observational. Types of observational studies:

Prospective cohort study, which follows groups of people for a long time

Retrospective cohort study, which looks at past data of cohorts

Case-control study, which compares people who have an outcome (cases) with similar people who don't (controls) and looks back at their past exposures

It’s important to introduce the idea of confounding factor. This factor exists when an apparent association between two outcomes might be better explained by a common confounding factor that influences both. This means that the true cause is not the initially correlated one but a third factor. Example: any correlation between ice cream sales and drownings can be explained by the weather which influences both.
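A simulation sketch of the ice cream and drownings example. It assumes a made-up model in which temperature drives both quantities and neither causes the other; all coefficients are hypothetical. Holding the confounder roughly fixed makes the association largely disappear.

```python
import random

random.seed(3)

def pearson(xs, ys):
    """Pearson correlation coefficient."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sum((x - mx) ** 2 for x in xs) ** 0.5
    sy = sum((y - my) ** 2 for y in ys) ** 0.5
    return cov / (sx * sy)

# Hypothetical model: temperature drives both quantities; neither causes the other.
temps = [random.uniform(5, 35) for _ in range(2000)]
ice_cream = [10 * t + random.gauss(0, 40) for t in temps]
drownings = [0.2 * t + random.gauss(0, 1.5) for t in temps]

overall = pearson(ice_cream, drownings)

# Hold the confounder (roughly) fixed: only days between 24 and 26 degrees.
band = [i for i, t in enumerate(temps) if 24 <= t <= 26]
within = pearson([ice_cream[i] for i in band], [drownings[i] for i in band])

print("overall correlation:", round(overall, 2))
print("correlation at ~25 degrees:", round(within, 2))
```

Stratifying on a suspected confounder like this is one simple check; it only works for confounders you have thought of and measured.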

Can we conclude causation from observational data?

Three types of evidence can help establish causation from observational (rather than experimental) data: direct, mechanistic and parallel evidence.

Direct evidence

1. Size of the effect is so large that it can't be explained by confounding factors

2. Appropriate temporal and/or spatial proximity, in that cause precedes effect and effect occurs after a plausible interval

3. Dose responsiveness and reversibility: the effect is larger if exposure increases, and the evidence is even better if the effect reduces when exposure stops

Mechanistic evidence

4. Plausible mechanism of action: external evidence for a causal chain (i.e. it's not contradicted by current science or is similar to known effects)

Parallel evidence

5. Effect fits with what is already known

6. Effect is found when the study is replicated

7. Effect is found in similar studies

These criteria are by no means a guaranteed process (ideally you'd have randomization and be able to run trials).

Correlation and regression

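Correlation describes how strongly two measures are related. Pearson's correlation coefficient, for example, ranges from -1 to 1 and measures how close the points lie to a straight line.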

The best-fit line for prediction is the one with the smallest residuals (i.e. the vertical distances between the points and the line). Least squares chooses the line that minimizes the sum of the squared residuals.

In regression, the dependent variable is the quantity that we want to predict, also called the response variable. The independent variable is the x-axis or explanatory variable.

The gradient, aka the regression coefficient b1 in y = b0 + b1x, is the slope, which defines both the direction and the steepness of the line. The intercept b0 is the value of y when x = 0.
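A from-scratch sketch of least-squares simple linear regression, using the closed-form solution b1 = Sxy / Sxx and b0 = mean(y) - b1 * mean(x). The fathers' and sons' heights are hypothetical numbers.

```python
def least_squares(xs, ys):
    """Fit y = b0 + b1*x by minimising the sum of squared residuals."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    b1 = sxy / sxx          # gradient (regression coefficient)
    b0 = my - b1 * mx       # intercept: predicted y when x = 0
    return b0, b1

# Hypothetical data: fathers' and sons' heights in cm.
fathers = [165, 170, 175, 180, 185, 190]
sons = [170, 172, 176, 178, 181, 184]

b0, b1 = least_squares(fathers, sons)
residuals = [y - (b0 + b1 * x) for x, y in zip(fathers, sons)]
print(f"sons = {b0:.1f} + {b1:.2f} * fathers")
print("sum of squared residuals:", round(sum(r * r for r in residuals), 2))
```

In Python 3.10+, `statistics.linear_regression(fathers, sons)` returns the same slope and intercept.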

The British polymath Sir Francis Galton, mapping the heights of fathers and sons, noticed that very tall fathers tend to have sons who are tall but closer to the average, and very short fathers tend to have sons who are short but closer to the average. Galton called this "regression to mediocrity"; today we call it regression to the mean. Similarly, if we toss a coin many times we might get 8 or 9 heads in a row, but such an extreme run is likely to be followed by less extreme results, and over many tosses the proportion of heads tends towards 50%.
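A simulation sketch of regression to the mean. It assumes each test score is a stable "skill" plus independent luck on each occasion (the numbers are hypothetical). People selected for an extreme first score do less extremely the second time, with no intervention at all.

```python
import random
import statistics

random.seed(4)

# Assumption: each score = stable skill + independent luck on each occasion.
N = 10_000
skill = [random.gauss(100, 10) for _ in range(N)]
test1 = [s + random.gauss(0, 10) for s in skill]
test2 = [s + random.gauss(0, 10) for s in skill]

# Select the top 10% on the first test...
cutoff = sorted(test1)[int(0.9 * N)]
top = [i for i in range(N) if test1[i] >= cutoff]

# ...and see how the same people do the second time.
m1 = statistics.fmean(test1[i] for i in top)
m2 = statistics.fmean(test2[i] for i in top)
everyone = statistics.fmean(test2)

print("top group, test 1:", round(m1, 1))
print("top group, test 2:", round(m2, 1))
print("everyone, test 2: ", round(everyone, 1))
```

The top group is still above average on the second test (skill is real), but part of their first-test advantage was luck that does not repeat.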

A model is a representation of some aspect of the world which is based on simplifying assumptions: observation = deterministic model (signal) + residual error (noise).

Multiple linear regression: when we use > 1 explanatory variable.

There are 4 main modeling strategies:

Simple math (e.g. linear regression) - used by statisticians

Deterministic models (e.g. weather forecasting) - used by applied mathematicians

Algorithms that make decisions (e.g. machine learning) - used by data scientists

Regression models - used by economists

Important to remember:

Models are maps, not the territory.

All models are wrong, but some are useful.

George Box

Algorithms and Machine learning

Supervised learning - classification

Unsupervised learning - clustering

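Sensitivity: the percentage of true positives that are correctly identified as positive

Specificity: the percentage of true negatives that are correctly identified as negative

Algorithms that give a probability instead of a category can be assessed with a Receiver Operating Characteristic (ROC) curve, which shows the trade-off between sensitivity and specificity (plotting sensitivity against 1 - specificity) as the threshold for calling a case positive varies.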
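A sketch of how sensitivity, specificity and ROC points are computed from a probabilistic classifier. The scored cases are hypothetical; each threshold yields one (1 - specificity, sensitivity) point on the ROC curve.

```python
# Hypothetical classifier output: (predicted probability, true label) pairs.
scored = [(0.95, 1), (0.85, 1), (0.80, 0), (0.70, 1), (0.60, 1),
          (0.45, 0), (0.40, 1), (0.30, 0), (0.20, 0), (0.10, 0)]

def sens_spec(scored, threshold):
    """Sensitivity and specificity when predicting 'positive' if p >= threshold."""
    tp = sum(1 for p, y in scored if p >= threshold and y == 1)
    fn = sum(1 for p, y in scored if p < threshold and y == 1)
    tn = sum(1 for p, y in scored if p < threshold and y == 0)
    fp = sum(1 for p, y in scored if p >= threshold and y == 0)
    return tp / (tp + fn), tn / (tn + fp)

# Each threshold gives one point on the ROC curve: (1 - specificity, sensitivity).
roc = []
for threshold in (1.01, 0.9, 0.75, 0.5, 0.35, 0.0):
    sens, spec = sens_spec(scored, threshold)
    roc.append((1 - spec, sens))
    print(f"threshold {threshold:.2f}: sensitivity {sens:.1f}, specificity {spec:.1f}")
```

Lowering the threshold catches more true positives (higher sensitivity) at the cost of more false alarms (lower specificity).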

To forecast the weather given the large number of variables, forecasters run many versions of their models (an ensemble). If, out of 50 runs, 5 say it will rain, we can estimate a 10% probability of rain. This is connected to ensemble methods: machine learning techniques that combine several base models to produce one optimal predictive model.

Calibration: checking whether predicted probabilities match observed frequencies (e.g. of all the days given a 70% chance of rain, it should rain on roughly 70%).

A skill score compares a forecasting method against a reference method (e.g. a simple baseline) to measure the relative improvement.
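A sketch of a calibration check and a skill score. It uses the Brier score (mean squared error of probability forecasts) and, as an assumed reference method, always forecasting the overall base rate. Forecasts and outcomes are hypothetical.

```python
from collections import defaultdict

# Hypothetical forecasts (probability of rain) and outcomes (1 = it rained).
forecasts = [0.1, 0.1, 0.1, 0.1, 0.1, 0.7, 0.7, 0.7, 0.7, 0.7]
outcomes = [0, 0, 0, 0, 1, 1, 1, 0, 1, 1]

# Calibration: for each forecast probability, how often did the event happen?
bins = defaultdict(list)
for p, y in zip(forecasts, outcomes):
    bins[p].append(y)
for p, ys in sorted(bins.items()):
    print(f"forecast {p:.0%}: rained on {sum(ys) / len(ys):.0%} of {len(ys)} days")

def brier(probs, events):
    """Brier score: mean squared error of probability forecasts (lower is better)."""
    return sum((p - y) ** 2 for p, y in zip(probs, events)) / len(events)

# Reference method (an assumption): always forecast the overall base rate.
base_rate = sum(outcomes) / len(outcomes)
reference = [base_rate] * len(outcomes)

skill = 1 - brier(forecasts, outcomes) / brier(reference, outcomes)
print(f"Brier: {brier(forecasts, outcomes):.3f}, "
      f"reference: {brier(reference, outcomes):.3f}, skill: {skill:.2f}")
```

A skill score of 0 means no improvement over the reference; 1 would be perfect forecasts.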

Overfitting

Overfitting: making a model too sophisticated (e.g. giving it more parameters). In the case of decision trees, we could add more and more branches. As a consequence, the model starts fitting noise rather than signal.

We overfit when we overspecialize a model to local circumstances. Overfitting reduces bias at the cost of increased variance. Intuition: overfitting is adding more and more rules to a model.

The best way to create a decision tree is to first create a very deep tree and then prune the branches using complexity parameters. So we deliberately overfit the trees and then prune them to create a more robust model.

To protect against overfitting, use cross-validation: split the training data into ten parts, develop the model on 90% of the data, test it on the remaining 10%, and repeat ten times so that each part is held out once. This is tenfold cross-validation.
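A pure-Python sketch of tenfold cross-validation comparing three models on hypothetical data generated from a straight line plus noise: a baseline that predicts the training mean, a least-squares line, and a 1-nearest-neighbour model that memorises the training data. The data and model choices are our assumptions for illustration.

```python
import random

def k_fold_indices(n, k=10, seed=0):
    """Shuffle indices 0..n-1 and split them into k roughly equal folds."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    return [idx[i::k] for i in range(k)]

def cross_validate(xs, ys, fit, k=10):
    """Average squared prediction error on held-out folds."""
    errors = []
    for fold in k_fold_indices(len(xs), k):
        held_out = set(fold)
        train = [i for i in range(len(xs)) if i not in held_out]
        predict = fit([xs[i] for i in train], [ys[i] for i in train])
        errors.extend((ys[i] - predict(xs[i])) ** 2 for i in fold)
    return sum(errors) / len(errors)

def fit_mean(xs, ys):
    """Baseline model: always predict the training mean."""
    m = sum(ys) / len(ys)
    return lambda x: m

def fit_line(xs, ys):
    """Least-squares straight line."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    b1 = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    return lambda x: my + b1 * (x - mx)

def fit_1nn(xs, ys):
    """1-nearest-neighbour: memorises the training data (prone to overfitting)."""
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]

rng = random.Random(5)
xs = [rng.uniform(0, 10) for _ in range(200)]
ys = [2 * x + rng.gauss(0, 3) for x in xs]

results = {name: cross_validate(xs, ys, fit)
           for name, fit in [("mean", fit_mean), ("line", fit_line), ("1-NN", fit_1nn)]}
for name, err in results.items():
    print(f"{name}: 10-fold CV error {err:.2f}")
```

The 1-NN model fits its training data perfectly but does worse than the simple line on held-out data, which is exactly what cross-validation is designed to reveal.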

More techniques

Random forest: create a large number of trees, each grown on a resampled version of the data, and let them vote on the final classification (bagging)

Support Vector Machines

Neural Networks

K-Nearest-Neighbor
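Of the techniques listed, k-nearest-neighbour is the easiest to sketch from scratch: classify a new point by majority vote among the k closest labelled points. The training points below are hypothetical.

```python
import math
from collections import Counter

def knn_classify(train, query, k=3):
    """Label `query` by majority vote among its k nearest training points.

    `train` is a list of ((x, y), label) pairs; distance is Euclidean.
    """
    nearest = sorted(train, key=lambda item: math.dist(item[0], query))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Hypothetical labelled points.
train = [((1, 1), "A"), ((1, 2), "A"), ((2, 1), "A"),
         ((6, 6), "B"), ((6, 7), "B"), ((7, 6), "B")]

print(knn_classify(train, (1.5, 1.5)))  # A
print(knn_classify(train, (6.5, 6.0)))  # B
```

Small k follows the training data closely (risking overfitting); larger k gives smoother, more stable decisions.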

When choosing an algorithm, we should follow a "good enough" mantra. Very complex models may end up never being used (e.g. Netflix awarded a $1m prize for the best recommendation algorithm and ended up not using it because it was too complex).

Better to have something simple and with good enough accuracy than perfect but indecipherable.

Sampling

The size of our sample influences how we look at the world.

Problem: our sample may not completely reflect the population, and we usually have only one sample, so we can't directly see how much our estimates would vary.

Solution: take samples from the sample.

Bootstrapping consists of repeatedly resampling, with replacement, from our sample and recalculating the statistic of interest each time. It's a useful method when we have limited data.

This leads to the Central Limit Theorem: the distribution of sample means tends towards a normal distribution as the sample size increases, regardless of the shape of the underlying distribution. This is why the means of the resamples drawn from our sample also tend to look normally distributed.

Bootstrapping can be used to create confidence intervals, since it shows the spread of the statistic across many resamples of your sample. Normally you'd report a 95% confidence interval.
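A sketch of a percentile bootstrap confidence interval, one common bootstrap method (others exist). The skewed "waiting times" sample is hypothetical, and `bootstrap_ci` is an illustrative helper, not a library function.

```python
import random
import statistics

def bootstrap_ci(sample, stat=statistics.fmean, n_boot=2000, level=0.95, seed=0):
    """Percentile bootstrap confidence interval for `stat`.

    Resamples the data with replacement n_boot times and takes the
    central `level` share of the resulting statistics.
    """
    rng = random.Random(seed)
    boot_stats = sorted(
        stat(rng.choices(sample, k=len(sample))) for _ in range(n_boot)
    )
    lo_idx = int((1 - level) / 2 * n_boot)
    hi_idx = int((1 + level) / 2 * n_boot) - 1
    return boot_stats[lo_idx], boot_stats[hi_idx]

# Hypothetical skewed sample (e.g. waiting times in minutes).
data_rng = random.Random(1)
sample = [data_rng.expovariate(1 / 10) for _ in range(100)]

low, high = bootstrap_ci(sample)
print(f"mean {statistics.fmean(sample):.1f}, 95% CI ({low:.1f}, {high:.1f})")
```

Even though the data are skewed, a histogram of the 2,000 bootstrap means would look roughly bell-shaped, as the Central Limit Theorem suggests.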

Probability theory

A useful technique for understanding the likelihood of scenarios is expected frequencies: imagining what would happen if the events in question were repeated a number of times.

For example, when tossing a coin twice, the probability of getting two tails in a row is 1/2 × 1/2 = 1/4.
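The coin example can be checked by enumerating every equally likely outcome, using exact fractions from Python's standard library.

```python
from fractions import Fraction
from itertools import product

# All equally likely outcomes of two tosses of a fair coin: HH, HT, TH, TT.
outcomes = list(product("HT", repeat=2))
p_two_tails = Fraction(sum(o == ("T", "T") for o in outcomes), len(outcomes))
print(p_two_tails)        # 1/4

# Expected frequency: out of 100 pairs of tosses, we expect about 25 to be two tails.
print(p_two_tails * 100)  # 25
```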

Prosecutor's fallacy: confusing P(A|B) with P(B|A), which are generally not equal. For example, if a DNA test wrongly matches an innocent person only 10% of the time, that doesn't mean there's a 90% probability that a man who matches is guilty.
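A worked sketch of the prosecutor's fallacy using Bayes' theorem. The scenario is hypothetical: one guilty person among 1,000 possible suspects, a test that always matches the guilty person, and a 10% chance of wrongly matching an innocent one.

```python
def p_guilty_given_match(prior, p_match_if_guilty, p_match_if_innocent):
    """Bayes' theorem: P(guilty | match)."""
    p_match = prior * p_match_if_guilty + (1 - prior) * p_match_if_innocent
    return prior * p_match_if_guilty / p_match

# Hypothetical case: 1 guilty person among 1,000 possible suspects.
posterior = p_guilty_given_match(prior=1 / 1000,
                                 p_match_if_guilty=1.0,
                                 p_match_if_innocent=0.10)
print(f"P(match | innocent) = 10%, but P(guilty | match) = {posterior:.1%}")
```

In expected frequencies: of 1,000 suspects, the 1 guilty person matches, but so do about 100 innocent people, so a match alone gives roughly a 1 in 100 chance of guilt, not 90%.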

There are several ways in which probability could be interpreted.

Classical probability

Enumerative

Long run

Subjective

The author argues that there is no "true" probability, only a subjective view of things.

Probability theory applies naturally when a data point is generated by a random device (e.g. dice or random sampling). But much of the time there is no explicit randomness, and we simply act as if the data were generated by a random process.

When we want to treat data as if it were random and we are counting events, we can use the Poisson distribution, which depends only on the average (mean) rate. The Poisson distribution expresses the probability of a given number of events occurring in a fixed interval of time or space if these events occur with a known constant mean rate and independently of the time since the last event. Whenever we are counting how many times a repeated, independent event happens, the Poisson distribution is useful.
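A direct implementation of the Poisson probability formula, P(k) = e^(-λ) λ^k / k!, using only Python's `math` module. The average rate of 1.5 events per day is a hypothetical value.

```python
import math

def poisson_pmf(k: int, rate: float) -> float:
    """P(exactly k events in an interval) when events occur at average `rate` per interval."""
    return math.exp(-rate) * rate ** k / math.factorial(k)

RATE = 1.5  # hypothetical average number of events per day
for k in range(6):
    print(f"P({k} events) = {poisson_pmf(k, RATE):.3f}")
```

Multiplying each probability by the number of days observed gives the expected number of days with 0, 1, 2, ... events, which can be compared with what actually happened.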

A funnel plot draws control limits ("guard rails") that narrow as the sample size grows, to distinguish the variation expected by chance from genuine outliers.
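A sketch of how funnel-plot limits narrow with sample size. It treats each case as a Bernoulli trial and uses the normal approximation to the binomial, p0 ± z·sqrt(p0(1 - p0)/n), which is a simplifying assumption (exact binomial limits are often preferred for small n). The overall rate of 5% is hypothetical; funnel plots commonly draw limits at about 95% and 99.8%.

```python
import math

def funnel_limits(p0: float, n: int, z: float = 1.96):
    """Approximate control limits for an observed proportion from n cases.

    Uses the normal approximation to the binomial: p0 +/- z * sqrt(p0(1-p0)/n),
    clipped to the range [0, 1].
    """
    half_width = z * math.sqrt(p0 * (1 - p0) / n)
    return max(0.0, p0 - half_width), min(1.0, p0 + half_width)

P0 = 0.05  # hypothetical overall rate (e.g. a mortality rate)
for n in (50, 200, 1000, 5000):
    lo, hi = funnel_limits(P0, n)
    print(f"n = {n:5d}: expect between {lo:.1%} and {hi:.1%}")
```

A small unit with a rate of 10% may sit comfortably inside its wide limits, while a large unit with 6.5% may already fall outside its narrow ones.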

Bernoulli trial: event with a binary outcome.

The Gambler’s fallacy

Gambler's fallacy: the idea that a streak of one outcome will be compensated for by opposite outcomes in the future. Example: after a streak of 'red' in roulette, people believe 'black' is more likely to come up.

There is no compensation in statistics. What happens over time is that the unlikely streak gets overwhelmed by the sheer power of the law of large numbers. Future events don't make up for what has already happened; what you can say is that, over time, the proportion of outcomes will tend towards the expected probability.
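A simulation sketch of "dilution, not compensation". It assumes a streak of 10 reds followed by 100,000 spins of a fair red/black wheel (ignoring green, a simplification).

```python
import random

rng = random.Random(7)

# Start with a streak of 10 reds, then spin a fair wheel (ignoring green) 100,000 times.
reds, blacks = 10, 0
for _ in range(100_000):
    if rng.random() < 0.5:
        reds += 1
    else:
        blacks += 1

total = reds + blacks
print(f"proportion red: {reds / total:.4f}")  # close to 0.5: the streak is diluted
print(f"reds minus blacks: {reds - blacks}")  # expected value is still +10: never "paid back"
```

Each spin is still 50/50; the early streak simply becomes a negligible share of a very large number of spins.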

There are two types of uncertainty:

Aleatory uncertainty: the unavoidable unpredictability of a future event, considered a priori (e.g. before a coin is tossed)

Epistemic uncertainty: our personal ignorance about an event that is already fixed (a posteriori) but unknown to us (e.g. after the coin is tossed but before we look)

A confidence interval is the range of population parameter values for which the observed statistic is a plausible consequence.

When doing surveys, a common sample size is 1,000. The 95% margin of error for a percentage is then at most about ±100/√1000 ≈ ±3 percentage points.
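A sketch comparing the exact 95% margin of error for a proportion, 1.96 × sqrt(p(1 - p)/n), with the ±1/√n rule of thumb. It assumes a simple random sample; p = 0.5 gives the widest margin.

```python
import math

def margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """95% margin of error for a proportion from a simple random sample of size n."""
    return z * math.sqrt(p * (1 - p) / n)

n = 1000
print(f"formula at p = 0.5:      +/-{margin_of_error(n):.1%}")  # +/-3.1%
print(f"rule of thumb 1/sqrt(n): +/-{1 / math.sqrt(n):.1%}")    # +/-3.2%
```

Because the margin shrinks with √n, quadrupling the sample size only halves the margin of error.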

Hypothesis testing

Hypothesis testing lets us ask and answer questions of data. To answer a question, we form a null hypothesis, typically that there is no effect, which we then look for evidence against.

It works like a trial: a defendant can be found guilty, but the court can't establish their innocence (you're not found innocent, you're found not guilty). Likewise, failing to reject the null hypothesis doesn't prove it true. The same idea applies to detecting cancer: you can't prove someone does not have cancer, but if a thorough search finds no evidence of it, you can be reasonably satisfied that it is absent.[2]

P-value: the smaller the p-value, the stronger the evidence against the null hypothesis. For a one-sided test, the p-value is the area in the tail of the distribution. It's the probability of getting a result at least as extreme as ours if the null hypothesis were really true. If the p-value is small enough, we say the result is statistically significant: such an extreme result would be very unlikely if the null hypothesis were true, so we reject the null hypothesis. This is called Null Hypothesis Significance Testing (NHST). Conventional thresholds for significance are 0.05 and 0.01.

Intuition for p-values: how surprised should we be by the data if the null hypothesis (usually the opposite of what we want to show) were true?

The t-value is the estimate divided by its standard error: it says whether the association between an explanatory variable and the response is statistically significant. The greater the t-value (in absolute size), the stronger the evidence against the null hypothesis (the opposite direction to the p-value, where smaller means stronger evidence).
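A sketch of an exact one-sided p-value from the binomial distribution. The scenario is hypothetical: the null hypothesis is that a coin is fair, and we observe 9 heads in 10 tosses.

```python
from math import comb

def binomial_p_value(k: int, n: int, p: float = 0.5) -> float:
    """One-sided p-value: P(X >= k) for X ~ Binomial(n, p) under the null hypothesis."""
    return sum(comb(n, i) * p ** i * (1 - p) ** (n - i) for i in range(k, n + 1))

# Null hypothesis: the coin is fair. Observed: 9 heads in 10 tosses.
p_one_sided = binomial_p_value(9, 10)
print(f"one-sided p-value: {p_one_sided:.4f}")      # 11/1024, about 0.0107
print(f"two-sided p-value: {2 * p_one_sided:.4f}")  # doubling is valid here since p = 0.5 is symmetric
```

At a 0.05 threshold, 9 heads out of 10 would be called statistically significant, but that says nothing about how biased the coin is.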

So when you have statistically significant results, you see high t-values and low p-values (sometimes represented as '***' in studies).

The problem with relying on a fixed p-value threshold is that running many tests creates false positives. If we run 1,000 tests on a drug known not to work, a threshold of 0.01 will give about 10 false positives. The Bonferroni correction addresses this by using a threshold of 0.05/n, where n is the number of tests.

The use of p-values is widespread and often abused. In research, there are six principles for p-values:

P-values can indicate how incompatible the data are with a statistical model.

A p-value is not the probability that the hypothesis is true.

Scientific, business or policy conclusions should not be based only on whether a p-value passes a threshold.

Proper inference requires full reporting and transparency.

A p-value does not measure the size of an effect or the importance of a result.

By itself, a p-value is not a good measure of evidence.
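A simulation sketch of the multiple-testing problem. It relies on the fact that, for a continuous test statistic, p-values are uniformly distributed between 0 and 1 when the null hypothesis is true; the 1,000 tests are simulated, not real data.

```python
import random

rng = random.Random(11)
N_TESTS = 1000
ALPHA = 0.01

# Under a true null hypothesis, p-values are uniform on [0, 1].
p_values = [rng.random() for _ in range(N_TESTS)]

false_positives = sum(p < ALPHA for p in p_values)
bonferroni_threshold = 0.05 / N_TESTS
bonferroni_positives = sum(p < bonferroni_threshold for p in p_values)

print(f"expected false positives at {ALPHA}: {N_TESTS * ALPHA:.0f}")
print(f"observed false positives: {false_positives}")
print(f"Bonferroni threshold: {bonferroni_threshold:g}")
print(f"positives after Bonferroni: {bonferroni_positives}")
```

Bonferroni keeps the chance of even one false positive across all tests at about 5%, at the cost of making real effects harder to detect.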

Bayes' Theorem

Bayes' theorem:

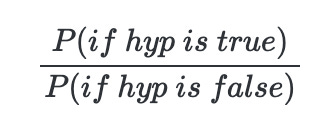

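where the likelihood ratio is:

$$\text{likelihood ratio} = \frac{P(\text{evidence} \mid \text{hypothesis})}{P(\text{evidence} \mid \text{not hypothesis})}$$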

Bayes' theorem starts from a prior probability and updates it in the light of new evidence. It is not allowed in UK courts, because it requires assigning prior probabilities. However, likelihood ratios are used in courts to express the strength of evidence. They have verbal equivalents (e.g. "weak support"), and they can be combined: for independent pieces of evidence, the likelihood ratios multiply.

So while each piece of evidence may be weak, together they may lead to a very large likelihood ratio. Here is a scale for interpreting likelihood ratios:

- 1 to 10: Weak

- 10 to 100: Moderate

- 100 to 1,000: Moderately strong

- 1,000 to 10k: Strong

- 10k to 100k: Very strong

- 100k to 1m: Extremely strong

Bayes' theorem is the right way to change your mind on the basis of new evidence. It's a formal tool for using background knowledge to make realistic inferences.

Bayesian analysis combines prior information with the likelihood to create a posterior distribution.

MRP - Multilevel regression and post-stratification. This has given good results in predicting elections.

Reproducibility crisis
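A sketch of Bayes' theorem in odds form (posterior odds = prior odds × likelihood ratios), with a mapping onto the verbal scale above. The prior of 1 in 10,000 and the three likelihood ratios are hypothetical, and multiplying them assumes the pieces of evidence are independent given each hypothesis.

```python
import math

# Upper bound of each band on the verbal scale (for likelihood ratios >= 1).
SCALE = [(10, "weak"), (100, "moderate"), (1_000, "moderately strong"),
         (10_000, "strong"), (100_000, "very strong"), (1_000_000, "extremely strong")]

def verbal_support(lr):
    """Map a likelihood ratio (>= 1) to a verbal description of support."""
    for upper, label in SCALE:
        if lr <= upper:
            return label
    return "beyond the scale"

def posterior_probability(prior_prob, likelihood_ratios):
    """Posterior odds = prior odds * product of likelihood ratios (assumes independence)."""
    odds = prior_prob / (1 - prior_prob)
    odds *= math.prod(likelihood_ratios)
    return odds / (1 + odds)

evidence_lrs = [20, 50, 30]         # hypothetical, each only "moderate" on its own
combined = math.prod(evidence_lrs)  # 30,000
posterior = posterior_probability(1 / 10_000, evidence_lrs)

print(f"combined LR: {combined:,} ({verbal_support(combined)} support)")
print(f"posterior probability: {posterior:.2f}")
```

Even "very strong" combined evidence only lifts a 1 in 10,000 prior to about 75%, which shows why the prior matters as much as the evidence.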

The Reproducibility Project found that while 96% of studies had statistically significant results, only 36% of the replications did.

Rather than on 'discoveries', studies should focus on the size of the effect. The Reproducibility Project found that, on replication, the effects were about half the magnitude of the originals.

One of the worst forms of bad practice is cherry picking the statistical tests that show good results. Statistical fraud can be detected using statistical science.

Cyril Burt, who researched the heritability of IQ, was posthumously accused of fraud. He was accused of making the data up.

One way to reduce the chances of false discoveries is to create more structure in scientific departments (like a press department) to make checks and balances and to communicate effectively and accurately the information.

If we become too strict with our research methods, we'll also reduce exploration. So the best practice is to allow two types of studies:

Exploratory - more lenient

Confirmatory - more strict with statistical inferences

On being trustworthy

One cannot ask to be trusted. Trust is given as a result of consistent behavior, so researchers should demonstrate the trustworthiness of their work.

Onora O'Neill says that instead of asking to be trusted, one should try to be trustworthy. To be trustworthy, one must be:

Honest

Competent

Reliable

We prove our trustworthiness through intelligible evidence.

Ultimately, you have to have these traits in your studies in order to optimize for trust and reproducibility:

Accessible: people need to be able to access the information

Intelligible: comprehension/understanding of the information

Assessable: people should be able to validate the work

Usable: people should be able to leverage the information for their needs

That’s it! Congrats on getting through this summary.

See you next time.


1. https://first10em.com/ebm/ascertainment-bias/

2. Taleb, Nassim Nicholas. The Black Swan: The Impact of the Highly Improbable. Vol. 2. Random House, 2007.